- Blog

- Hypack support

- Ewqlso routing reaper

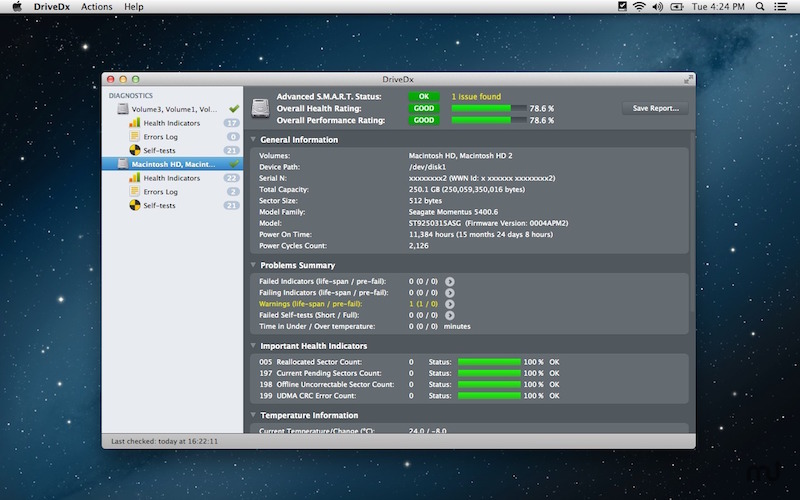

- Drivedx 1-5-1 serial

- Uff uff kamal raja lyrics

- Punjabi sweets

- Invisible contracts

- Paint tool sai default brushes

- Dilwale dulhania le jayenge all song

- Kramer ferrington 12 string

- Riven chromas

- Lego worlds pc gaming wiki

- Does maximus arcade work on windows 10

- Edius 5

- Spark Programming Guide: detailed overview of Spark in all supported languages (Scala, Java, Python, R).
- Spark Streaming: processing real-time data streams.
- Spark SQL and DataFrames: support for structured data and relational queries.
- MLlib: built-in machine learning library.
- GraphX: Spark’s new API for graph processing.
- Bagel (Pregel on Spark): older, simple graph processing model.
- Cluster Overview: overview of concepts and components when running on a cluster.
- Submitting Applications: packaging and deploying applications.
- Amazon EC2: scripts that let you launch a cluster on EC2 in about 5 minutes.
- Standalone Deploy Mode: launch a standalone cluster quickly without a third-party cluster manager.
- YARN: deploy Spark on top of Hadoop NextGen (YARN).
- Configuration: customize Spark via its configuration system.
- Hardware Provisioning: recommendations for cluster hardware.
- 3rd Party Hadoop Distributions: using common Hadoop distributions.
- Integration with other storage systems.


To run locally on one machine, all you need is to have `java` installed on your system PATH, or the JAVA_HOME environment variable pointing to a Java installation. Spark runs on Java 7+, Python 2.6+ and R 3.1+. For the Scala API, you will need to use a compatible Scala version.

Spark comes with several sample programs. Scala, Java, Python and R examples are in the `examples/src/main` directory. To run one of the Java or Scala sample programs, use `bin/run-example` in the top-level Spark directory.

You can also run Spark interactively through a modified version of the Scala shell, which is a great way to learn the framework: `./bin/spark-shell --master local`. The `--master` option takes the master URL for a distributed cluster, `local` to run locally with one thread, or `local[N]` to run locally with N threads. For a full list of options, run Spark shell with the `--help` option.
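The local master URL forms described above can be illustrated with a small helper. This is only a sketch: `local_master` and `spark_shell_command` are hypothetical names invented here, not part of Spark, and the `spark_home` default of `.` assumes the command is run from the top-level Spark directory. The code builds the command line but does not launch anything.

```python
def local_master(threads=None):
    """Return a local master URL: 'local' for one thread, 'local[N]' for N threads.

    Hypothetical helper for illustration; not part of Spark.
    """
    if threads is None or threads == 1:
        return "local"
    if not isinstance(threads, int) or threads < 1:
        raise ValueError("threads must be a positive integer")
    return "local[{}]".format(threads)


def spark_shell_command(threads=None, spark_home="."):
    """Build the argument list for starting spark-shell with a local master.

    spark_home is assumed to be the top-level Spark directory.
    """
    return [spark_home + "/bin/spark-shell", "--master", local_master(threads)]


if __name__ == "__main__":
    # Only print the command; Spark may not be installed on this machine.
    print(" ".join(spark_shell_command(4)))
```

The returned list could be passed to `subprocess.call` on a machine where Spark is unpacked. For a distributed cluster, a cluster master URL would take the place of the `local` forms.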


Spark runs on both Windows and UNIX-like systems.
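Because the only local prerequisite named in this overview is a Java installation (found on the system PATH or through JAVA_HOME), it can be checked on either kind of system before starting Spark. The sketch below is one way to do that. `find_java` is a hypothetical helper, and checking JAVA_HOME before PATH is a choice made for this example rather than something the text specifies.

```python
import os
import shutil


def find_java(env=None):
    """Return the path of a java executable, or None if none is found.

    Hypothetical helper: looks in JAVA_HOME/bin first, then on PATH.
    The lookup order is an assumption of this sketch.
    """
    env = os.environ if env is None else env

    java_home = env.get("JAVA_HOME")
    if java_home:
        # shutil.which also handles the .exe suffix on Windows.
        found = shutil.which("java", path=os.path.join(java_home, "bin"))
        if found:
            return found

    path = env.get("PATH")
    if path:
        return shutil.which("java", path=path)
    return None


if __name__ == "__main__":
    java = find_java()
    if java:
        print("Java found at " + java)
    else:
        print("No Java found: install Java or set JAVA_HOME")
```

Passing an explicit `env` mapping keeps the function easy to test without changing the real environment.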


Apache Spark is a fast and general-purpose cluster computing system. It provides high-level APIs in Java, Scala, Python and R, and an optimized engine that supports general execution graphs. It also supports a rich set of higher-level tools including Spark SQL for SQL and structured data processing, MLlib for machine learning, GraphX for graph processing, and Spark Streaming.

Get Spark from the downloads page of the project website. This documentation is for Spark version 1.5.1. Spark uses Hadoop’s client libraries for HDFS and YARN. Downloads are pre-packaged for a handful of popular Hadoop versions. Users can also download a “Hadoop free” binary and run Spark with any Hadoop version.